If you’ve followed my writing, you might have noticed I’ve been working with Windows Virtual Desktop (WVD) a little. You can read more about setting up WVD, setting up the management tool, or how to set up FSLogix.

By default, the WVD template deploys the session hosts in an availability set. This spreads the workload between different fault domains and update domains in a single Azure DC. This protects you from local failures such as top-of-rack switch outages, but doesn’t protect you from full DC outages (think flood, hurricane…). Availability Zones are different physical DCs in a single Azure region, so spreading session hosts across zones also protects you against the loss of an entire DC.

In this post I’ll walk you through how you can set up WVD in availability zones.

Design considerations

There are a couple of design considerations to keep in mind when you think about deploying WVD in availability zones. There are two ways to deploy your host pools across availability zones:

- Deploy one host pool per zone in an active/passive setup, and redirect users to the passive zone in case of a zone failure.

- Deploy a single host pool that spans zones. Have spare capacity in all zones to handle the increase in user load in case of a zone outage.

There’s something to be said for each approach. In the first approach, you deploy a host pool statically to each zone, and control which user connects to which zone. However, you need to deploy double the infrastructure if you want to be able to quickly fail over to the secondary zone.

In the second approach you spread a host pool across multiple zones. With three zones, a zonal outage would impact only 1/3 of your users. If each zone is sized with 50% additional capacity, the two remaining zones can absorb the load of the failed zone.
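The 50% figure follows from the zone count: when one of N zones fails, its users are shared among the N - 1 surviving zones. Here is a minimal sketch of that arithmetic. The function names and the example numbers (300 peak users, 10 users per session host) are hypothetical and only there for illustration.

```python
import math


def headroom_per_zone(zone_count: int) -> float:
    """Extra capacity each zone needs, as a fraction of its normal load,
    so that the surviving zones can absorb one failed zone."""
    if zone_count < 2:
        raise ValueError("need at least two zones to survive a zone outage")
    return 1 / (zone_count - 1)


def hosts_per_zone(peak_users: int, users_per_host: int, zone_count: int = 3) -> int:
    """Session hosts to deploy in each zone so that all peak users still fit
    when one zone is down (the surviving zones carry the full load)."""
    surviving_zones = zone_count - 1
    return math.ceil(peak_users / (users_per_host * surviving_zones))


if __name__ == "__main__":
    # Hypothetical sizing: 300 peak users, 10 users per session host, 3 zones.
    print(headroom_per_zone(3))
    print(hosts_per_zone(300, 10, 3))
```

With three zones, each zone normally serves a third of the users, so sizing every zone for half of the total load is the same as adding 50% on top of its normal share. With only two zones, the headroom grows to 100%, which is the "double the infrastructure" cost of the active/passive approach.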

If you are using FSLogix for profile containers, you also need to consider the high availability of your storage solution. If you are using Azure Files for your FSLogix backend, you can deploy that easily on a zone-redundant storage (ZRS) account. If you’re hosting your own file server, you need to make that available across multiple zones, for example by using Storage Replica with synchronous replication. If you’re using Azure NetApp Files, that service isn’t AZ aware. However, it does provide a 99.99% SLA.

With that being said, let’s deploy a host pool in availability zones.

Setting up a host pool in Availability Zones

In this section we’ll deploy a host pool in availability zones. We’ll use the spreading methodology explained in the previous section, meaning a single host pool spanning multiple zones.

To set up a host pool in availability zones, we’ll need to make some changes to the ARM template that deploys the host pool. The default template is hosted on GitHub. I’ve uploaded the changed template to my own GitHub profile.

There are two changes to this ARM template:

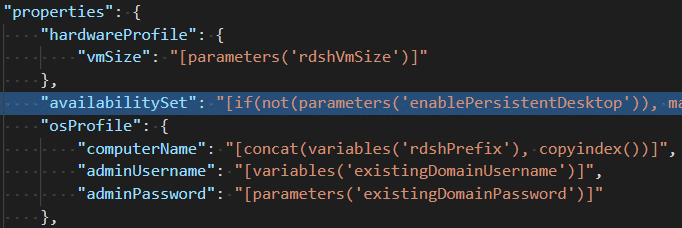

- The availabilitySet reference is deleted:

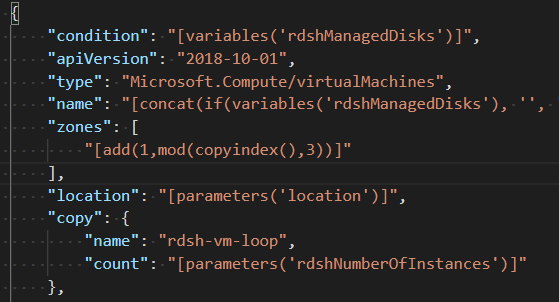

- We added a section for zones. We use a modulo operation to rotate between zones, and add 1 to the result (availability zones are 1-indexed, not 0-indexed).

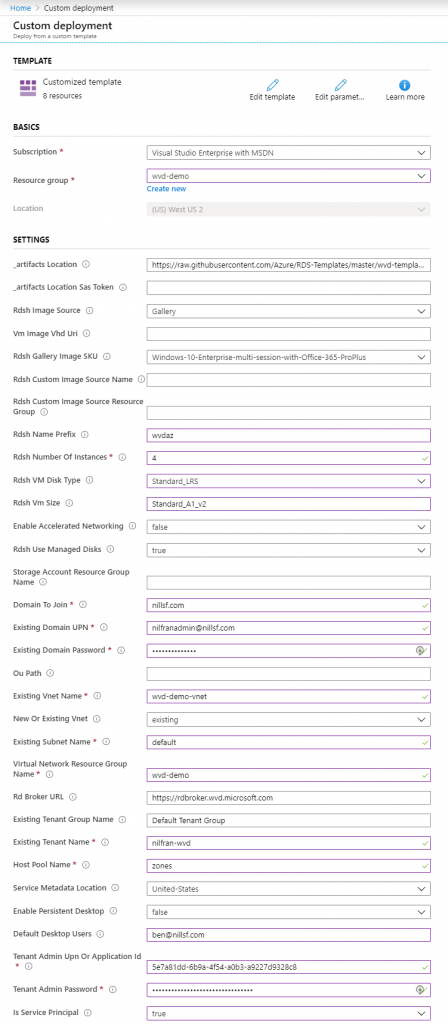
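To make the rotation concrete, here is a small Python sketch of the same modulo logic. It is not the template expression itself (that lives in the ARM template), and the function name and the default of three zones are assumptions for illustration.

```python
def zone_for_index(vm_index: int, zone_count: int = 3) -> int:
    """Map a 0-based VM index to a 1-based availability zone.

    The modulo cycles through 0..zone_count-1; adding 1 shifts the result
    to 1..zone_count, because availability zones are 1-indexed.
    """
    return vm_index % zone_count + 1


if __name__ == "__main__":
    # Four session hosts spread over three zones (hypothetical host count).
    for index in range(4):
        print(f"VM {index} -> zone {zone_for_index(index)}")
```

The first three VMs land in zones 1, 2 and 3, and the fourth wraps around to zone 1 again.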

With these changes, we should be able to deploy this template in our Azure subscription. In my case, I’ll deploy this in my MSDN subscription. If you want to deploy this as well, there is a “Deploy to Azure” button on the GitHub repo.

The “Deploy to Azure” button will load the template in the Azure portal. You’ll now have to fill in the required settings.

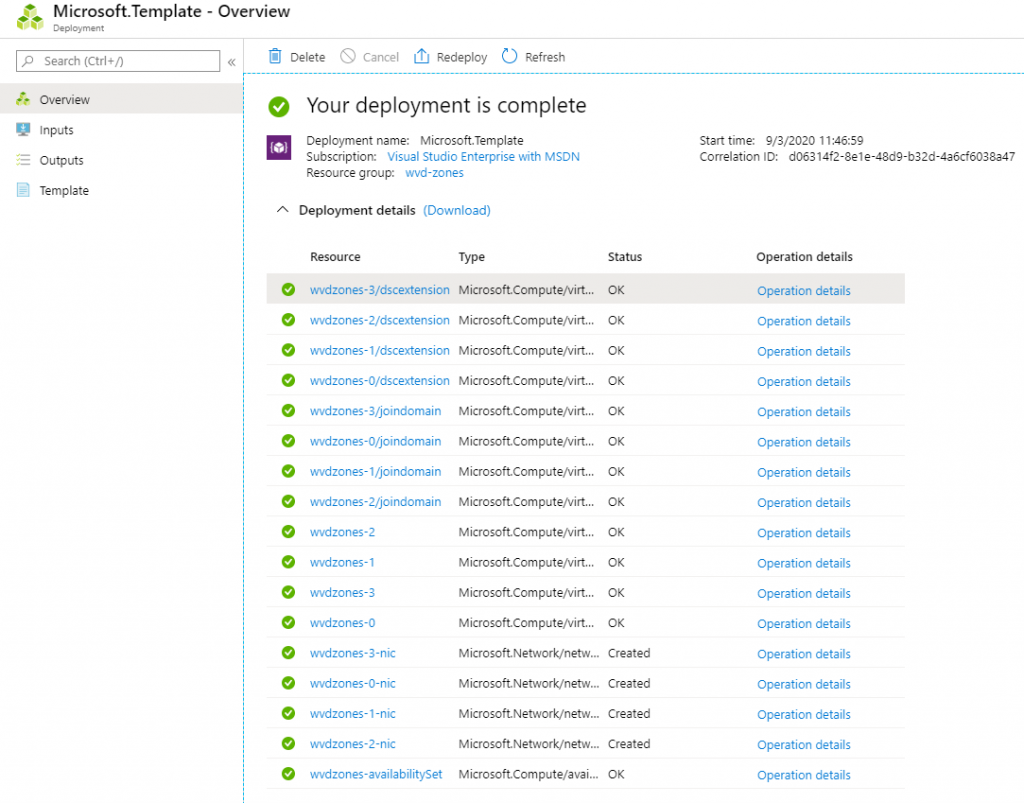

This will take about half an hour to complete. Once it is complete, you should see the following result:

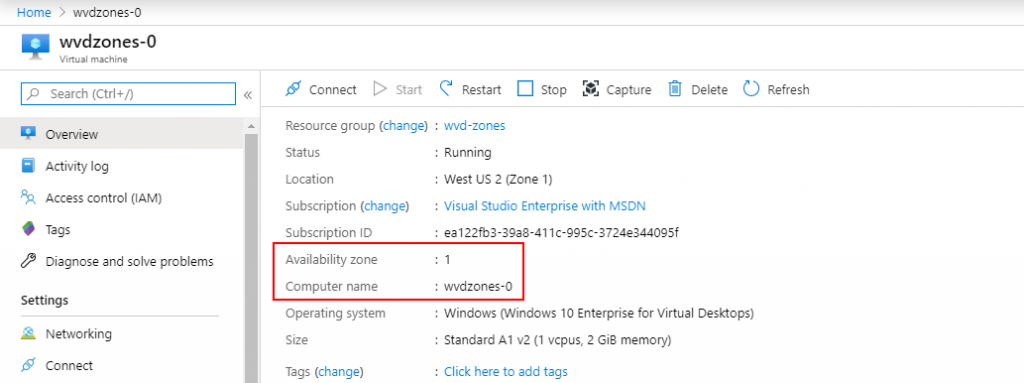

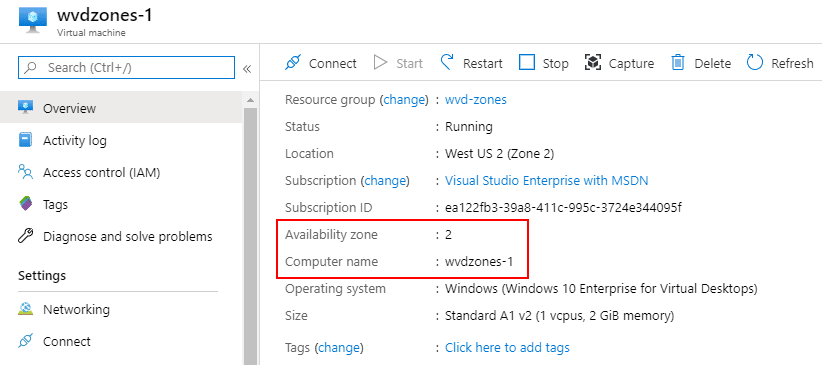

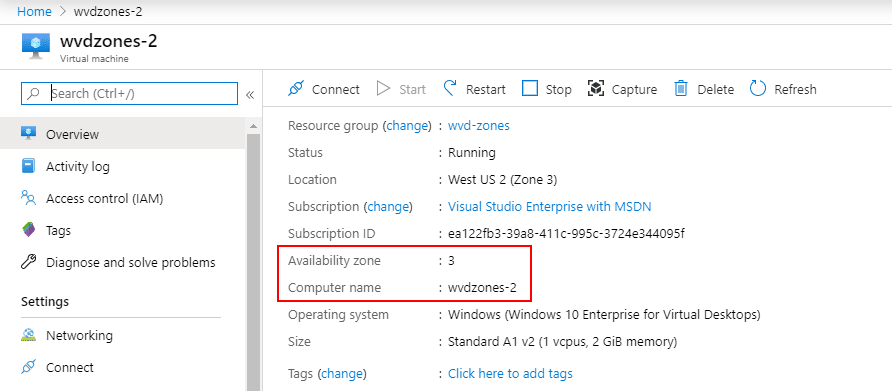

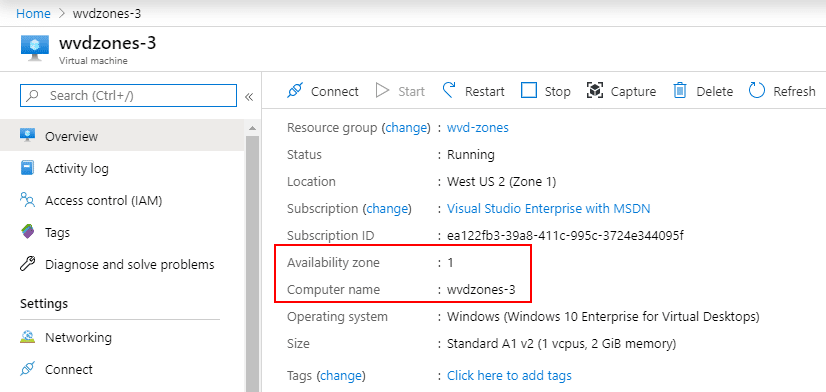

Let’s also have a look at the individual VMs, and their availability zones:

As you can see, the VMs are cleanly cycled through the different availability zones. The 4th VM is created again in zone 1.

Summary

This post describes how you can deploy a WVD host pool in availability zones. I showed you a template that creates a single host pool that spans multiple zones.

There are a number of other considerations you need to take into account before rolling this out to production. Your file share location becomes important in this design, as does how you connect back to on-premises (if you plan to do this). If you have a firewall deployed in front of internet traffic, that also needs to be set up for high availability.

On a closing note, this template uses regular VMs. Although you can run WVD host pools on VMSS (and there are partners such as Nerdio who do this), the product team doesn’t officially support that at the moment, which is why I’m sticking with regular VMs.