It’s already been about 6 months since Microsoft announced Dapr. Dapr, or Distributed Application Runtime, is an open source project with the goal of making it easier for developers to write microservices. That’s a nice goal, and in this blog we’ll explore what this means.

Since its launch, I’ve heard more and more about Dapr. As with every launch, Dapr came with some announcements, Azure Friday episodes etc. What seemed uncommon to me was that Dapr seemed to keep people enticed even after its launch. I kept seeing people in my network tweet about it, and recently there was an episode of the Azure podcast about Dapr that convinced me I had to finally take a look, which I did today.

In this blog we’ll cover what Dapr is, how it is different from a service mesh and a quick demo of running Dapr. I’ve compiled a list of more resources at the end of this post.

Before we start, one disclaimer: I’m not at all an expert on Dapr, so please take what I write with some healthy skepticism.

What is Dapr

Dapr is a portable, event-driven runtime that makes it easy for enterprise developers to build resilient, microservice stateless and stateful applications that run on the cloud and edge and embraces the diversity of languages and developer frameworks.

Dapr documentation

The definition above comes from the Dapr documentation. It’s a mouthful, and I’ll try to deconstruct it using what I’ve learned in my day of research.

One of the main features of Dapr is that it enables you to use external components (such as messaging infrastructure or a state store) without having to write to a specific implementation of that external component. Your application calls Dapr with the action you want to take (e.g. read state from a DB), and Dapr translates this into an implementation of that action depending on your configuration (e.g. read state from CosmosDB).

Let’s consider this example: you need to read and write events to a message queue. Dapr allows you to do this without using a queue-specific SDK. The queue implementation is handled by the Dapr runtime, which even allows you to swap out implementation details. From an application perspective, you simply call Dapr with the action you need to take and Dapr handles the translation.

This also makes it possible to write your application once, run it on multiple clouds, and on each cloud simply have a different Dapr configuration file for that specific queue implementation. In practice you could use Event Hubs on Azure, and Google Cloud Pub/Sub on GCP.
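To make this concrete, here is a minimal sketch of what "calling Dapr with the action you want to take" could look like for saving state. It assumes the `/v1.0/state/<store-name>` HTTP path from the Dapr state API (older releases may use a different path), a sidecar listening on port 3500, and a state store named `statestore`; the function names are my own and not part of Dapr.

```python
import json
import urllib.request

DAPR_PORT = 3500  # assumed sidecar HTTP port


def build_save_state_request(store_name, key, value, dapr_port=DAPR_PORT):
    """Build (but do not send) a request asking the sidecar to save one key/value pair."""
    # Note: nothing here names Redis, CosmosDB or any other backing store.
    url = f"http://localhost:{dapr_port}/v1.0/state/{store_name}"
    body = json.dumps([{"key": key, "value": value}]).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def save_state(store_name, key, value):
    """Send the request; this only works with a Dapr sidecar running."""
    request = build_save_state_request(store_name, key, value)
    with urllib.request.urlopen(request) as response:
        return response.status


request = build_save_state_request("statestore", "order", {"orderId": "41"})
print(request.full_url)
```

Which database ends up holding the order is decided by the Dapr configuration, not by this code.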

Current capabilities in Dapr (aka Building Blocks)

In its current version, Dapr has 6 capabilities, which it calls building blocks. When you’re building your application, you can pick and choose which of these you use.

- Service Invocation: This allows you to do service-to-service calls and have Dapr take care of concerns such as error handling.

- State Management: State management allows you to store key/value pairs so that long-running stateful services can run in Dapr. State stores can be implemented as e.g. Azure CosmosDB, Azure Tables, AWS DynamoDB and more.

- Pub/sub messaging: This allows you to publish events and subscribe to topics. You could use Azure Event Hubs, Azure Service Bus, RabbitMQ and more.

- Resource bindings: Event-driven programming bindings for Dapr. There are a whole number of experimental implementations available.

- Distributed tracing: Dapr makes it easy to set up distributed tracing using OpenTelemetry. Distributed traces are essential to troubleshooting issues in microservices architectures, as a single end-user operation will trigger multiple microservices to be executed.

- Actors: Dapr has taken a lot of the Service Fabric actor implementation code, and made that available outside of Service Fabric. Actor-based programming is a specific programming style that is useful for scenarios with high concurrency.
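As an illustration of the pub/sub building block, the sketch below builds a publish request for the sidecar. It assumes the `/v1.0/publish/<pubsub-name>/<topic>` path from the current Dapr pub/sub API (earlier releases may differ); the component name `pubsub` and the topic `orders` are hypothetical.

```python
import json
import urllib.request


def build_publish_request(pubsub_name, topic, event, dapr_port=3500):
    """Build (but do not send) a request that publishes one event to a topic."""
    # The application only names a topic; which broker sits behind it
    # (Event Hubs, Service Bus, RabbitMQ, ...) is a matter of configuration.
    url = f"http://localhost:{dapr_port}/v1.0/publish/{pubsub_name}/{topic}"
    return urllib.request.Request(
        url,
        data=json.dumps(event).encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/json"},
    )


request = build_publish_request("pubsub", "orders", {"orderId": "41"})
print(request.full_url)
```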

Where do you run Dapr?

Dapr makes use of the sidecar pattern to implement its logic. This means you run Dapr as a separate process next to your application code. You can run Dapr either on a local system as a process, or in a Kubernetes cluster as a sidecar container.

You can interface with this sidecar process/container using HTTP or gRPC. This means you do not need an SDK to interface with Dapr, although the project has SDKs available to make this easier. SDKs are available (for now) in C++, Go, Java, JavaScript, Python and .NET.
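To show that plain HTTP is enough, here is a small sketch that invokes a method on another service through the sidecar using only the Python standard library. The URL shape matches the curl command used in the demo later in this post; the function names are hypothetical, and port 3500 is an assumption.

```python
import json
import urllib.request


def build_invoke_request(app_id, method, payload, dapr_port=3500):
    """Build (but do not send) a service invocation request for the sidecar."""
    url = f"http://localhost:{dapr_port}/v1.0/invoke/{app_id}/method/{method}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def invoke(app_id, method, payload):
    """Send the request; this only works with a Dapr sidecar running."""
    request = build_invoke_request(app_id, method, payload)
    with urllib.request.urlopen(request) as response:
        return response.read()


request = build_invoke_request("nodeapp", "neworder", {"data": {"orderId": "41"}})
print(request.full_url)
```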

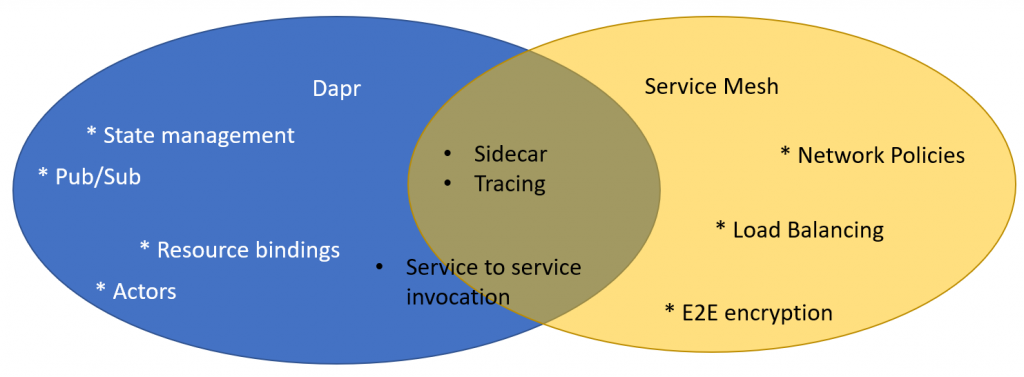

Comparing Dapr to a Service Mesh

Some of what Dapr promises to do sounds similar to what a service mesh (e.g. Istio / Linkerd) promises to do. Although there seemingly are some similarities, the goal of Dapr is distinct from the goal of a service mesh. In my interpretation, the goal of Dapr is to make interacting with external resources easier, whereas the goal of a service mesh is to create a more reliable network. Dapr adds functionality to your application (e.g. interacting with a state store), whereas a service mesh only adds infrastructure capabilities.

They seem similar because both use a sidecar container approach to implement their capabilities. However, this is a similarity in implementation, not in function. On a functional level, I believe there are two areas where Dapr and service meshes sort of overlap:

- Distributed tracing: Both Dapr and service meshes allow you to implement distributed tracing. This is a clear area of overlap in my opinion.

- Service to service invocation: I’m personally a bit confused about this one, because I see a similarity between the service-to-service invocation functionality in Dapr and how service meshes inject themselves into the traffic path of regular service-to-service communication. Dapr is distinct in that it provides an explicit API on /invoke to do service-to-service invocation. But once more functionality such as retry logic gets added to service-to-service invocation, I expect more overlap with e.g. Istio traffic policies.

The area of overlap between Dapr and service meshes is rather small. Both have their function in an application, and both can work together. According to the Dapr documentation, Dapr can work with both Istio and Linkerd.

Running a Dapr demo

This demo will create a Node app and a Python app that communicate with each other and persist state in a state store. We’ll first run the example, and then switch out the default Redis state store for Azure Tables.

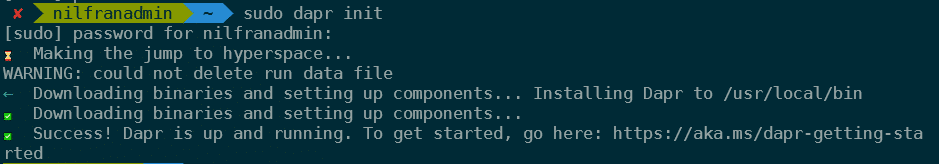

To get started with the demo, we’ll first install the Dapr CLI. In my case, I’ll be running this on my WSL setup. You will need Docker installed as well for this to run.

wget -q https://raw.githubusercontent.com/dapr/cli/master/install/install.sh -O - | /bin/bash

To then start Dapr on your local machine, you can run:

sudo dapr init

Now, we can clone the demo and navigate into the directory:

git clone https://github.com/dapr/samples.git

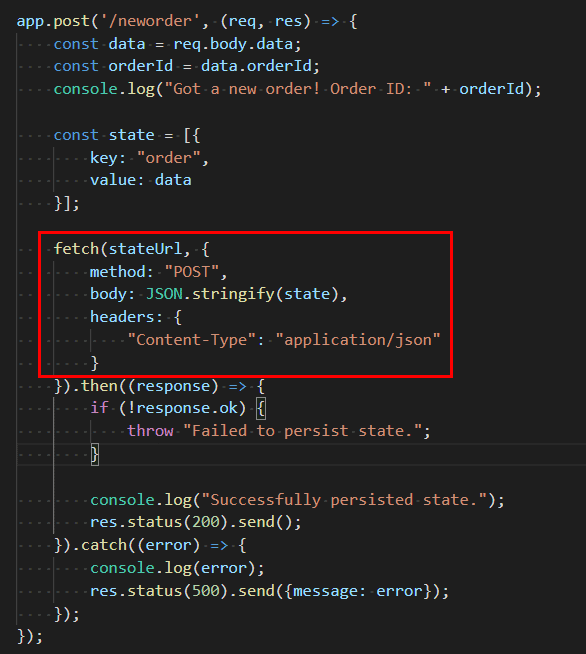

cd samples/1.hello-world

We’ll start with the Node application, which receives orders. There’s a /neworder path that takes a new order and persists it. If you look at the code, you’ll see that it does a POST against the stateUrl (which points at Dapr in this case), but doesn’t provide any details such as database, connection string etc.

To run this app, use these commands:

npm install

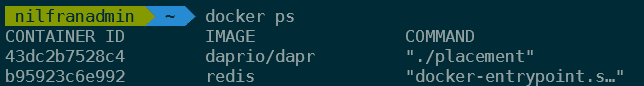

dapr run --app-id nodeapp --app-port 3000 --port 3500 node app.js

This demo app uses a Redis database as a state store. This DB is running as a container on your machine. To see this, run docker ps.

We can now create orders against this API, using:

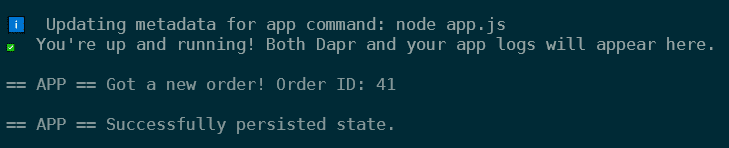

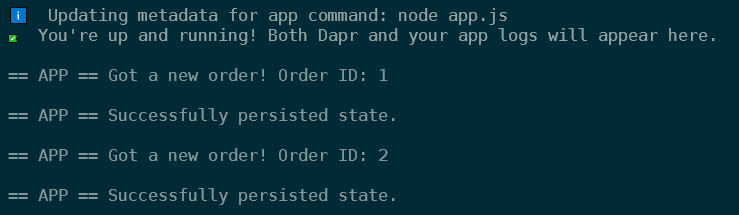

dapr invoke --app-id nodeapp --method neworder --payload '{"data": { "orderId": "41" } }'

Here, we’re using the Dapr CLI to post messages. We’re posting the message to the Dapr endpoint, not directly to the NodeJS app. After running this, we’ll see this appear in the logs of our app:

We can also post to Dapr using a curl command (which the Dapr CLI does on our behalf).

curl -XPOST -d @sample.json -H "Content-Type:application/json" http://localhost:3500/v1.0/invoke/nodeapp/method/neworder

There is also a Python app in this example that will post a message per second. To add this application to what’s already deployed, we can run:

pip3 install requests

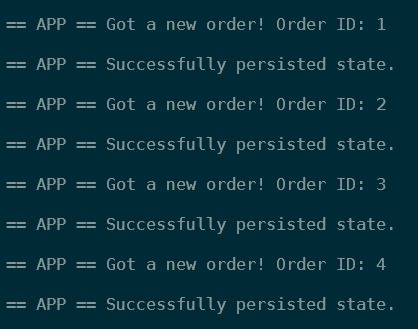

dapr run --app-id pythonapp python3 app.py

And this will indeed send a request per second:

Changing Redis to Azure Table

The GitHub demo didn’t include this, but I wanted to check out how easy it would be to replace Redis with Azure Tables (I first wanted to try CosmosDB, but didn’t have one running, so I switched to Tables).

The configuration of the state-store happens in the file components/statestore.yaml:

apiVersion: dapr.io/v1alpha1
kind: Component
metadata:
  name: statestore
spec:
  type: state.redis
  metadata:
  - name: redisHost
    value: localhost:6379
  - name: redisPassword
    value: ""
  - name: actorStateStore
    value: "true"

Let’s see if we can change this to Azure Table. Looking at the definition, we’ll need the account name, access key and table name.

apiVersion: dapr.io/v1alpha1

kind: Component

metadata:

name: statestore

spec:

type: state.azure.tablestorage

metadata:

- name: accountName

value: nfwestus2

- name: accountKey

value: VE5JBoIm...d5MQ==

- name: tableName

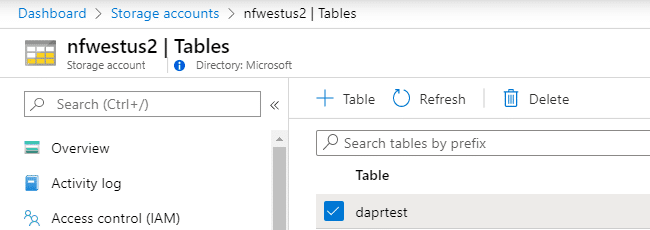

value: daprtest

This file is present twice in the directory, once in the parent and once in another subdirectory. Just to be sure, copy your statestore.yaml into the components subdirectory as well.
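To highlight how little changes between the two definitions, here is a small sketch that renders both component files from the same template. The helper `render_state_component` is hypothetical (it is not part of Dapr), and the account name and key are placeholders.

```python
import json


def render_state_component(name, component_type, settings):
    """Return the text of a Dapr state store component definition."""
    lines = [
        "apiVersion: dapr.io/v1alpha1",
        "kind: Component",
        "metadata:",
        f"  name: {name}",
        "spec:",
        f"  type: {component_type}",
        "  metadata:",
    ]
    for key, value in settings.items():
        lines.append(f"  - name: {key}")
        # json.dumps produces a double-quoted string, which is also valid YAML.
        lines.append(f"    value: {json.dumps(value)}")
    return "\n".join(lines) + "\n"


redis = render_state_component(
    "statestore",
    "state.redis",
    {"redisHost": "localhost:6379", "redisPassword": ""},
)
tables = render_state_component(
    "statestore",
    "state.azure.tablestorage",
    {"accountName": "<account>", "accountKey": "<key>", "tableName": "daprtest"},
)

# The component keeps the same name; only the type and its settings differ.
print(tables)
```

Because the component name stays `statestore`, the application code that talks to Dapr does not need to change.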

And now, let’s run both apps again.

dapr run --app-id nodeapp --app-port 3000 --port 3500 node app.js

dapr run --app-id pythonapp python3 app.py

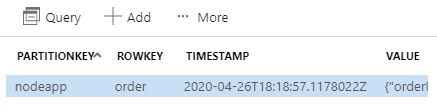

And, we can see the order appear in Table storage:

Summary

Dapr looks really promising. I can see it being an easy way to abstract implementation details away from developers. You don’t have to care about the messaging infrastructure or state store; you can simply write your application and program against a common abstraction layer.

In this post we had a look at Dapr, compared it to service meshes and ran a first demo. In this demo, we saw how easy it was to replace a Redis store with Azure Tables.

More resources

- Announcement blog

- Github

- Getting started

- Azure Friday part 1 and part 2 with Aman Bhardwaj and Yaron Schneider

- Azure community live with Mark Fussell