Ignite was held last week, and there were a bunch of amazing announcements from our product teams. The list is too long to reproduce here, but Microsoft released an e-book covering all of them. You can read about all announcements here.

One of the updates that caught my eye was Azure Front Door (AFD). It was mentioned during Yousef Khalidi’s keynote on networking announcements, and there was a dedicated 20-minute highlight session on the technology.

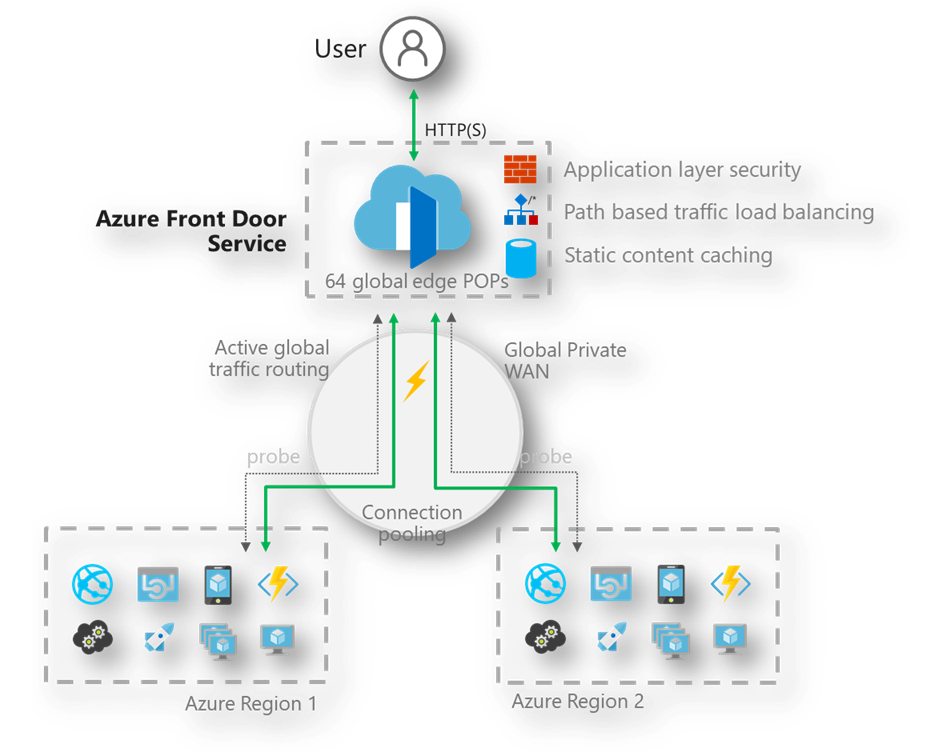

You might wonder what the difference is between AFD and a CDN (good question). A CDN typically caches the static content of your website, while you still serve the dynamic traffic from your own web servers. AFD actually sits in the traffic path: your users connect to AFD, which can optionally cache static content and pulls everything else from your web servers. The advantage is that the TCP connection setup is handled close to your end user, and that traffic between your web servers and the AFD endpoint travels over Microsoft’s global high-speed network.
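To make the connection-setup argument concrete, here is a rough back-of-the-envelope sketch in Python. The round-trip times are made-up illustrative numbers, not measurements, and the function name is my own. The model assumes the edge already holds an open connection to the origin and ignores server processing time.

```python
def time_to_first_byte_ms(client_rtt_ms: float,
                          handshake_round_trips: int,
                          origin_rtt_ms: float = 0.0) -> float:
    """Rough latency model (an assumption, not how AFD reports latency).

    The client pays `handshake_round_trips` round trips to whoever
    terminates its connection, plus one round trip for the request itself.
    If an edge proxies the request, add one edge-to-origin round trip over
    an already-open connection.
    """
    return client_rtt_ms * (handshake_round_trips + 1) + origin_rtt_ms


# Illustrative numbers only: 3 handshake round trips (TCP + TLS 1.2).
direct = time_to_first_byte_ms(client_rtt_ms=150, handshake_round_trips=3)
via_edge = time_to_first_byte_ms(client_rtt_ms=20, handshake_round_trips=3,
                                 origin_rtt_ms=140)
print(f"direct to origin: {direct:.0f} ms, via nearby edge: {via_edge:.0f} ms")
```

With these assumed numbers the handshake round trips are paid over the short hop to the edge instead of the long hop to the origin, which is where the saving comes from.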

I wanted to try it out, and was amazed by how easy it was to set up and use, and by how well it all worked. Specifically, I wanted to test a couple of things: (1) How hard is it to set up? (2) Does AFD serve traffic from the hosts closest to the end user? (3) How does it deal with application failures?

Let me walk you through it.

Infrastructure setup for this test

So, to test this out I set up three things:

- A webapp in West US 2 (close to home)

- A webapp in West Europe (close to where home used to be)

- A VM in the UK (to test connections to West Europe)

With those three things set up, I could start setting up AFD. The setup of AFD was really straightforward:

- You choose a domain name.

- You configure your back-end pool.

- You set up a routing rule.

Once this is set up, it takes about 2-3 minutes for AFD to become available. Conclusion to question (1): it takes less than 5 minutes to set up.

Testing out some traffic

Initially, I got round-robin traffic from West US 2 and West Europe, but after about 2 more minutes, I started hitting only the West US 2 endpoint (as expected).

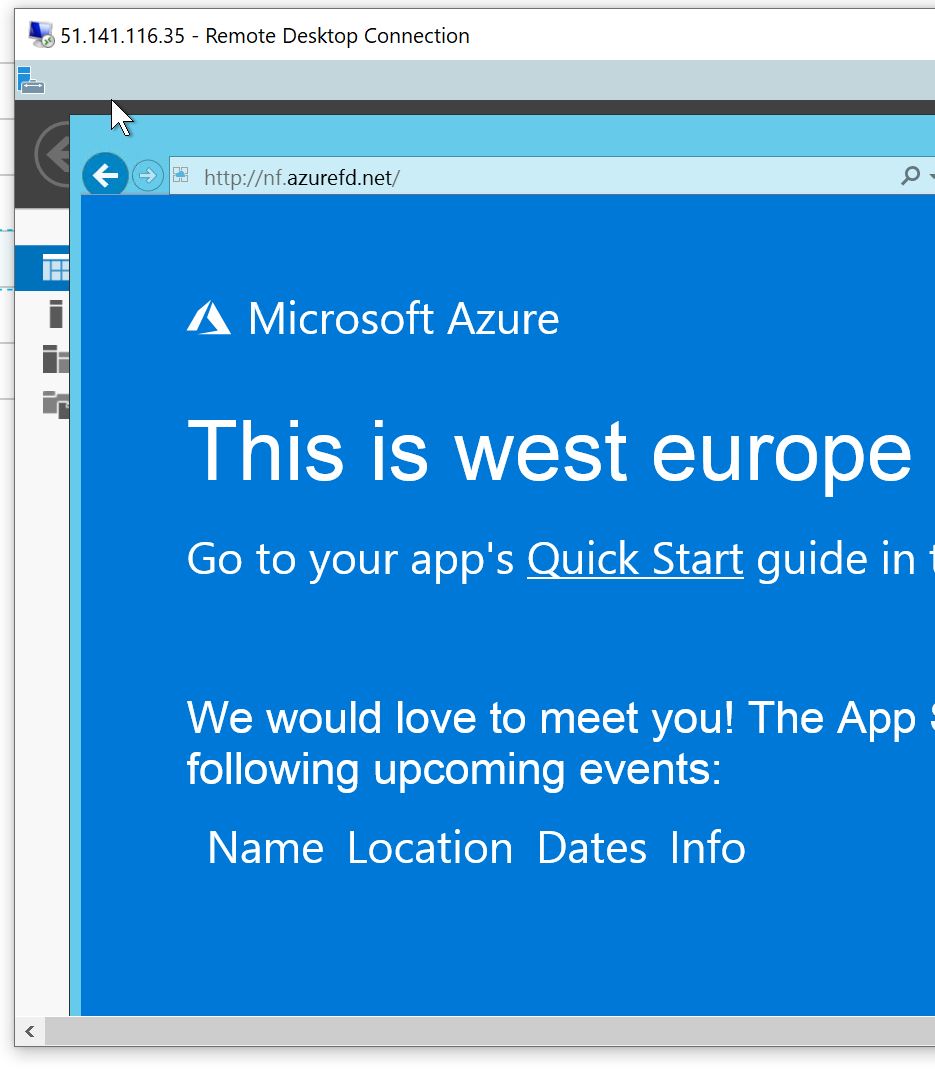

From my server in the UK, I kept hitting the West Europe endpoint – as expected.

Conclusion to question (2): it serves traffic close to the end user, without needing much configuration.

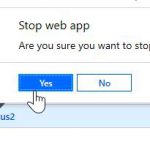
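If you want to repeat this kind of check yourself, a minimal Python sketch could look like the one below. It assumes each webapp returns nothing but its region name in the response body, which is my own convention for illustration. The function names and the URL in the comment are hypothetical too.

```python
from collections import Counter
from typing import Callable
import urllib.request


def fetch_region(url: str) -> str:
    """Return the response body, assumed here to be just the region name."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return resp.read().decode("utf-8").strip()


def tally_regions(fetch: Callable[[], str], requests: int) -> Counter:
    """Call fetch() `requests` times and count how often each region answered."""
    counts: Counter = Counter()
    for _ in range(requests):
        try:
            counts[fetch()] += 1
        except OSError:  # urllib's URLError and timeouts are OSError subclasses
            counts["error"] += 1
    return counts


# Example call (hypothetical front-end hostname, replace with your own):
# print(tally_regions(lambda: fetch_region("https://example.azurefd.net/"), 20))
```

Running it from two locations, such as home and the UK VM, should show the counts concentrating on the nearest region once routing has settled.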

I then decided to shut down my local webapps and see how fast traffic was rerouted. This took about 30 seconds: first a couple of seconds for the health probe to fail, and then one failed request before AFD rerouted the traffic. After bringing the host back online, it took about 1 minute to start serving local traffic again. This is a lot quicker than waiting out a DNS TTL, which is typically about 5 minutes and is out of your control (some providers automatically override the DNS TTL with a higher value).

Conclusion to question (3): it fails over quickly, and also fails back seamlessly.
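One way to put numbers on a failover test like this is to log the outcome of every request with a timestamp and compute how long each failing stretch lasted. The sketch below is my own helper, not part of any Azure tooling, and the sample data in the comment is invented for illustration.

```python
from typing import Iterable, List, Tuple


def outage_durations(samples: Iterable[Tuple[float, bool]]) -> List[float]:
    """Return the length in seconds of each failing stretch.

    `samples` are (timestamp_seconds, succeeded) pairs in time order.
    A stretch runs from the first failed request to the next successful
    one; a stretch still open at the end of the log is not reported.
    """
    durations: List[float] = []
    outage_start = None
    for timestamp, ok in samples:
        if not ok and outage_start is None:
            outage_start = timestamp          # first failure of a new stretch
        elif ok and outage_start is not None:
            durations.append(timestamp - outage_start)
            outage_start = None               # traffic has recovered
    return durations


# Invented log: one request per second, two of them failing.
# outage_durations([(0, True), (1, False), (2, False), (3, True)])
```

The resolution of the result is limited by how often you send requests, so a tight polling interval gives a better estimate.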

Conclusion

Well, this was a very easy and successful test with a new service. I see a lot of benefits in using the Azure Front Door service: it makes serving traffic local to your end user very straightforward, helps with failing over traffic seamlessly, and can provide some performance benefits. I didn’t test some of the advanced features, such as rate limiting and custom WAF rules, but you can implement these centrally and deploy them globally.

One word of caution before closing: Azure Front Door helps with routing front-end web traffic globally, but it doesn’t help with your app or data tier. You will still need a replication strategy for your data tier. We have multiple services in Azure that can help in that regard, from Azure SQL replication to Cosmos DB to even a managed MySQL DB that can be replicated.

If you’re interested in performance benchmarks, check out my friend Karim Vaes’s blog on the same topic.

I liked AFD during my tests. What do you think?